4.0 WORKFLOW AND DATA MANAGEMENT

Managing the process of acquiring and using data via an MLS survey requires extensive knowledge and experience. Figure 2 represents a typical workflow for MLS acquisition and processing, highlighting the key steps. Additional steps and procedures can be required depending on the applications of interest and end user data needs. Also, data is often processed using several software packages (both commercial off-the-shelf (COTS) and custom service-provider software) in order to produce the final products. Finally, several stages will require temporary data transfer and backup, which can require a substantial amount of time due to the sheer volume of data.

Depending on the application and in-house capabilities of a transportation agency, certain steps of the workflow may be modified. For example, a transportation agency may choose to perform the modeling themselves and may only want the point cloud delivered. This will result in a lower initial price since the data provider will only complete the acquisition and geo-referencing portions. However, it will require that the transportation agency has trained personnel and appropriate software to complete the modeling work. (See Section 6).

Figure 2: Generalized MLS workflow, including interim datasets (blue cans).

From a data management point of view, the most difficult stages in the workflow are the early ones because they deal with the storage and processing of large volumes of point cloud data. In contrast, later stages use the data to develop measurements or models, which are more modest in size. Archival or backup steps at any stage may also involve large files. Each stage is described in more detail below.

Recommendation: Become familiar with MLS workflows and how data management and training demands vary by stage.

4.1.1 Data acquisition

Data acquisition refers to the process of collecting data using the mobile system directly. Typically, information cannot be reliably extracted directly from this data because it needs further processing and refinement. A mobile LIDAR system will often record two channels of data: the 3D measurements of the scene relative to the vehicle, often referred to as intrinsic data, and the vehicle trajectory and orientation, referred to as extrinsic data.

4.1.2 Geo-referencing

Geo-referencing (also called registration) is the method by which intrinsic and extrinsic data are merged together to produce a single point cloud that is tied to a given coordinate system. To improve accuracy, this is usually accomplished through a post-processing step, after all possible information has been collected.
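As a rough illustration of how the two channels are merged, the sketch below rotates and translates each vehicle-relative measurement using the vehicle pose recorded at the same instant. It is a simplified sketch, not a production method: it assumes a level vehicle (heading only, no roll or pitch), a heading measured counter-clockwise from east, and measurements whose timestamps match trajectory samples exactly. The function names `georeference` and `build_point_cloud` and all sample values are hypothetical.

```python
import math


def georeference(point_vehicle, position, heading_deg):
    """Move one vehicle-frame point into map coordinates.

    point_vehicle: (x, y, z) in metres, x forward, y left, z up.
    position: (east, north, up) of the vehicle at the moment of measurement.
    heading_deg: direction of travel, counter-clockwise from east (assumed convention).
    Roll and pitch are assumed to be zero in this sketch.
    """
    x, y, z = point_vehicle
    east0, north0, up0 = position
    yaw = math.radians(heading_deg)
    # Rotate the horizontal offset by the heading, then add the vehicle position.
    east = east0 + x * math.cos(yaw) - y * math.sin(yaw)
    north = north0 + x * math.sin(yaw) + y * math.cos(yaw)
    return (east, north, up0 + z)


def build_point_cloud(measurements, trajectory):
    """Merge the two recorded channels into one georeferenced point list.

    measurements: list of (timestamp, x, y, z) tuples (intrinsic data).
    trajectory: dict mapping timestamp -> (east, north, up, heading_deg) (extrinsic data).
    """
    cloud = []
    for timestamp, x, y, z in measurements:
        east, north, up, heading = trajectory[timestamp]
        cloud.append(georeference((x, y, z), (east, north, up), heading))
    return cloud


# Hypothetical sample data: the same relative measurement taken at two poses.
measurements = [(0.0, 10.0, 0.0, 1.5), (1.0, 10.0, 0.0, 1.5)]
trajectory = {0.0: (500.0, 1000.0, 20.0, 0.0), 1.0: (500.0, 1005.0, 20.0, 90.0)}
cloud = build_point_cloud(measurements, trajectory)
```

The same relative measurement lands at different map coordinates depending on the vehicle pose, which is why errors in the trajectory propagate directly into the point cloud.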

4.1.3 Post-processing

The post-processing step includes basic operations that are typically performed automatically and with limited user input or feedback. Of particular relevance to the management of large LIDAR data sets are the operations of filtering and classification because they generally apply to each individual data point. That is, each point can be assigned a classification or filter value. This is in contrast to computations or analyses (e.g., extracting curb lines), which generally do not alter the fundamental point-cloud information.

- Filtering – Mobile LIDAR systems typically operate at high speed and in uncontrolled environments. A significant amount of data may be collected that does not accurately represent the scene of interest and should be filtered out prior to use. For example, when the laser beam is directed towards the open sky (i.e., devoid of objects such as tree canopy or the bottoms of overpasses), no meaningful information is extracted. As another example, points obtained from passing vehicles may not be of interest for most applications and would need to be removed before further processing.

- Classification – Of great importance to many users is the notion of classification of a point cloud, meaning the assigning of each point to one of a group of useful categories or “classes.” For instance, a point could be classified as “Low Vegetation” or “Building”.
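The two operations above can be sketched as per-point passes over the data. This is a minimal illustration under stated assumptions: the class codes are those of the ASPRS LAS specification, a range of 0.0 is assumed to mark a beam that returned nothing (for example, open sky), and `toy_rule` is a deliberately naive placeholder rather than a real classifier. The `Point` type and function names are hypothetical.

```python
from dataclasses import dataclass

# Class codes as defined in the ASPRS LAS specification.
UNCLASSIFIED = 1
LOW_VEGETATION = 3
BUILDING = 6


@dataclass
class Point:
    x: float
    y: float
    z: float
    range_m: float            # scanner-to-target distance; 0.0 assumed to mean no return
    classification: int = UNCLASSIFIED


def filter_points(points, max_range_m):
    """Keep only returns with a valid range inside the usable distance."""
    return [p for p in points if 0.0 < p.range_m <= max_range_m]


def classify(points, rule):
    """Assign a class code to every point using a caller-supplied rule."""
    for p in points:
        p.classification = rule(p)
    return points


def toy_rule(p):
    """Placeholder rule for illustration only: tall points become buildings."""
    return BUILDING if p.z > 3.0 else LOW_VEGETATION


raw = [
    Point(1.0, 2.0, 0.3, range_m=12.0),
    Point(4.0, 1.0, 8.0, range_m=30.0),
    Point(0.0, 0.0, 0.0, range_m=0.0),     # beam hit open sky
    Point(9.0, 9.0, 2.0, range_m=400.0),   # beyond the usable range
]
kept = classify(filter_points(raw, max_range_m=100.0), toy_rule)
buildings = [p for p in kept if p.classification == BUILDING]
```

Because both passes touch every point, their cost scales with the size of the cloud, which is why they matter for data management.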

4.1.4 Computation/analysis

In this step, meaningful high-level information is extracted from the lower-level data. The desired results depend significantly on the project’s overall goals. Furthermore, a number of options exist for analysis packages, ranging from general-purpose CAD systems to highly specialized or customized software. In most cases, the information that results from this analysis step is much smaller in file size and is therefore much more easily managed within an organization’s standard IT procedures. However, the cost and labor required to produce this information can be substantial, so it is important to manage this effort with a well-developed workflow process.

4.1.5 Packaging/delivery

The last stage completes the project and delivers the data. This is an important step that is sometimes overlooked. It is recommended that post-project reviews include feedback about the handling and utility of MLS data.

4.2 Models vs point clouds

Newcomers to LIDAR are often confused by the difference between models and point clouds (or 3D images). This section briefly introduces the distinction and highlights the important considerations. The procedures mentioned in the Guidelines are focused on point cloud geometric accuracy evaluation. However, point clouds can be processed into line work or solid models consisting of geometric primitives for use in CAD or GIS. When the principal deliverable is a model derived from the point cloud, it may be more appropriate to assess the accuracy of the final model rather than the point cloud. This can be done following procedures currently in place in many agencies for similar data sources. Further, in many cases, a model may be derived from data from several techniques (e.g., bathymetric mapping combined with MLS and static terrestrial laser scan (sTLS) data).

The use and application of models varies widely depending on application and is also an area of intense research and product development. For these reasons, additional details on this topic are beyond the scope of this document.

4.3 Coverage

Because the data collected with a LIDAR system can be much larger than that obtained by other methods, the temptation is to collect the minimum amount of data that appears to satisfy the initial project goals. However, the incremental cost of acquiring additional data is much less than the cost of a return trip to the field to collect information that was missed the first time. Typically, it is easier to over-collect and be certain to have all the data that might possibly be needed, even though the field time and storage requirements may be marginally higher. It is better to plan to collect a larger area than what is needed in order to mitigate possible gaps in data coverage.

Recommendation: With the costs of mobilization and operation relatively high, be sure to collect all of the data that is needed the first time.

4.4 Sequential & traceable processes

Whenever possible, all work should flow in one direction through a prescribed process. For instance, once users have begun extracting dimensional information from a data set, any re-registration (i.e., change in the point cloud coordinates) should be avoided. Additionally, each step in the data chain should be clearly reproducible: different operators starting from the same point should be able to arrive at the same result. This is often accomplished via clear procedures and the use of automated software tools (with standard or recorded settings). One best practice is to record a ‘snapshot’ of all information at particular points in the workflow, along with any processing settings required to reproduce the step, and insist that further processing take place only using the latest set of information.

Recommendation: Incorporate ‘snapshot’ procedures into your workflows.
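One possible way to record such a snapshot is a small manifest file that lists every file present at a given stage together with the processing settings used. The sketch below is an assumption about how this could be done, not a prescribed procedure: the manifest layout, the stage name, and the settings keys are all hypothetical.

```python
import hashlib
import json
import time
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so that very large point cloud files fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def write_snapshot(data_dir, stage, settings, manifest_path):
    """Record which files exist at this stage and the settings that produced them."""
    data_dir = Path(data_dir)
    files = {
        p.relative_to(data_dir).as_posix(): sha256_of(p)
        for p in sorted(data_dir.rglob("*"))
        if p.is_file()
    }
    manifest = {
        "stage": stage,                      # hypothetical stage label
        "created_utc": time.strftime("%Y-%m-%dT%H:%M:%SZ", time.gmtime()),
        "settings": settings,                # whatever is needed to reproduce the step
        "files": files,
    }
    Path(manifest_path).write_text(json.dumps(manifest, indent=2, sort_keys=True))
    return manifest
```

A call such as `write_snapshot("project/georeferenced", "geo-referencing", {"software_version": "x.y"}, "project/georeferenced.manifest.json")` (paths and settings are illustrative) would leave a record that a later operator can compare against before continuing work.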

4.5 Considerations for information technology

It can be a challenge to integrate MLS data with an organization’s existing IT systems. Establishing and managing this process therefore requires thought and preparation. This section points out important considerations that should be included when developing workflows and updating IT management procedures. The goal is for MLS data to be effectively integrated into the IT infrastructure. The following discussion focuses on high-level concepts; more detailed recommendations and strategies can be found in Part II.

4.5.1 File management

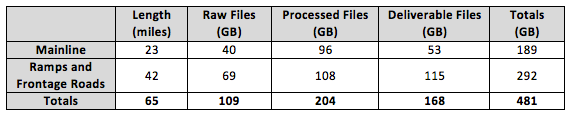

The physical location of and network connectivity to the data are important considerations since typical MLS files are large and complex. They can overwhelm IT systems that are not designed to handle the volume. Table 2 shows an example of file sizes (including imagery) for an Oregon DOT MLS project that consisted of 8 miles of the I-5 corridor (typically 2 northbound and 2 southbound lanes) using an asset management grade system (3B). In addition to the raw files, processed files and final deliverables require significant storage. Note that, in addition to the main corridor, ramps and frontage roads require additional passes along the section, so the distance driven to provide adequate coverage is much more than the 8-mile corridor length. For other projects, file sizes will vary greatly depending on the system used, the ancillary data (e.g., imagery) collected, and the needs of the project. Beyond the original files, additional storage will be required for archives or backups, file revisions, etc. “File bloat” (the proliferation of large files derived from the originals) can also strain storage and network systems. Furthermore, if data is collected for a higher data collection category (e.g., 1A), overall file sizes may increase up to tenfold.

Table 2: Example of file sizes for a sample Oregon DOT MLS project for 8 miles of interstate with 2-3 lanes each direction.

Storing multiple terabytes of data in an organization’s IT system can be expensive and time-consuming. It is important to realize that, while storage has become relatively inexpensive, access to and management of that data may not be. Moreover, this situation is likely to continue for some time because MLS manufacturers are continually producing systems that operate faster and collect more data, offsetting gains in storage or networking technology. Costs for storing and sharing the data efficiently will increase with the number of staff that need access.

An important realization is that most of the data will never change once created or after the initial processing. Therefore, this data can be excluded from normal IT procedures, such as RAID protection and backups. Doing so will significantly reduce the burden on IT systems and services, but it must be done carefully to maintain the integrity and utility of the data.

Recommendation: Expand IT policy and procedures to handle high-volume MLS data and make the data available to the entire enterprise.

4.5.2 Information transfer latency

The amount of time it takes to move data through a workflow can become a limiting factor in an organization’s ability to use MLS information effectively. Often large amounts of data must be moved, starting with the initial copying of the data from the source and continuing through backups, snapshots, and archival processes, as well as in-use transfers across networks or into and out of software applications.
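A back-of-the-envelope estimate can show how quickly transfer time adds up. The sketch below divides the data volume by the effective link speed. The data size, link speeds, and the 70% efficiency factor are hypothetical values chosen only for illustration and are not taken from any project in this document.

```python
def transfer_hours(size_gb, link_mbps, efficiency=0.7):
    """Estimate hours needed to move size_gb gigabytes over a link_mbps link.

    efficiency is an assumed fraction of the nominal link speed that is
    actually achieved once protocol overhead and contention are included.
    Decimal units are used: 1 GB = 8000 megabits.
    """
    size_megabits = size_gb * 8 * 1000
    seconds = size_megabits / (link_mbps * efficiency)
    return seconds / 3600


# Hypothetical comparison of one data set over two kinds of link.
for label, mbps in [("100 Mbps office network", 100), ("1 Gbps network", 1000)]:
    print(f"{label}: {transfer_hours(1000, mbps):.1f} hours for 1000 GB")
```

Every additional copy, backup, or application import repeats this cost, which is the reasoning behind minimizing file movement and scheduling transfers for off-hours.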

Recommendation: Minimize copying or movement of files with large amounts of data.

Recommendation: Schedule automated processing for overnight or offline operation.

4.5.3 Accessibility and security

The level of security required for a set of MLS data will depend on the application and the users’ policies and procedures. Most organizations already have security practices in place, and MLS data should be treated similarly. It is important to point out that none of the currently popular file formats for LIDAR data storage support encryption, so any access restrictions must be handled at the network or organizational levels.

4.5.4 Integrity

Integrity refers to the aspects of data storage that ensure the files have not inadvertently become corrupted, truncated, destroyed, or otherwise altered from the originals. Generally, integrity can be compromised in two ways: either through user error (typically deleting, renaming, or overwriting a file) or through hardware or software failures such as damaged or aged disks, network glitches, or software bugs. Strategies for maintaining integrity include backups, periodic validation, data snapshots, and permissions-based access.

Recommendation: Do not trust the OS to verify file integrity. Periodically verify your data.
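Periodic verification could, for example, compare each file against a checksum recorded when the file was first stored. The following is a minimal sketch under that assumption. The `expected` mapping of relative paths to SHA-256 digests is a hypothetical record that the organization would need to maintain, and the function names are illustrative.

```python
import hashlib
from pathlib import Path


def file_digest(path, chunk_size=1 << 20):
    """Compute a SHA-256 digest without loading the whole file into memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(data_dir, expected):
    """Compare stored files with previously recorded digests.

    expected: dict mapping relative path -> SHA-256 hex digest (assumed record).
    Returns the relative paths that are missing or whose content has changed.
    """
    data_dir = Path(data_dir)
    missing, changed = [], []
    for rel_path, digest in sorted(expected.items()):
        path = data_dir / rel_path
        if not path.is_file():
            missing.append(rel_path)       # deleted, renamed, or moved
        elif file_digest(path) != digest:
            changed.append(rel_path)       # overwritten, truncated, or corrupted
    return {"missing": missing, "changed": changed}
```

Running such a check on a schedule catches both kinds of failure described above: user errors show up as missing or changed files, and silent hardware faults show up as changed digests.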

4.5.5 Sunset plan

When MLS collections are used for projects, the data typically has a finite lifetime. Not only will the scanned scene change over time, but also the data formats and software applications will evolve. As the formats change, it becomes more difficult to convert older data into the newer formats, especially in cases where the destination format incorporates new technology or discoveries that are incompatible with the source formats. Further, maintaining archives is not trivial and may be costly, as data must be periodically validated and migrated to newer storage media as the older becomes worn out. Therefore, transportation agencies should implement a ‘sunset’ plan that specifies how long data is to be maintained, and at what levels of maintenance.

Recommendation: Include sunset provisions for MLS data in IT plans.

4.5.6 Software

MLS project workflows typically require use of several software packages, many of which are updated frequently (every 3-6 months). In general, easier to use software will cost more or may have reduced functionality. The type and number of software packages needed depend on how much of the processing will be done in house. Data interoperability between these packages and between software versions (not just for point clouds) is also an important consideration. In many cases, the geometry of features may transfer effectively between packages, but attributes are lost. Finally, plugins can be obtained for many CAD packages to enable point cloud support directly within the CAD software, reducing the amount of training needed.

Recommendation: Research and evaluate software packages and ensure proper interoperability across the entire workflow prior to purchase.

Recommendation: Invest in training staff on new software packages and workflows.

4.5.7 Hardware

Processing point cloud data requires high-performance desktop computing systems. Graphics capabilities similar to those found on gaming machines can significantly improve efficiency. Additionally, investment in 64-bit computing architecture is essential.